Images and videos are difficult to remove from the internet, and new material can always be created. “Every job interview you ever go to, this may come up. Potential romantic relationships,” says Martin. “To date, I’ve never succeeded in getting an image permanently deleted.” It can be even more complicated than revenge porn. Because the content is not genuine, Dodge says, women may wonder whether they deserve to feel traumatized and whether they should report it. “If someone is struggling with whether they are really a victim, it undermines their resilience,” he says.

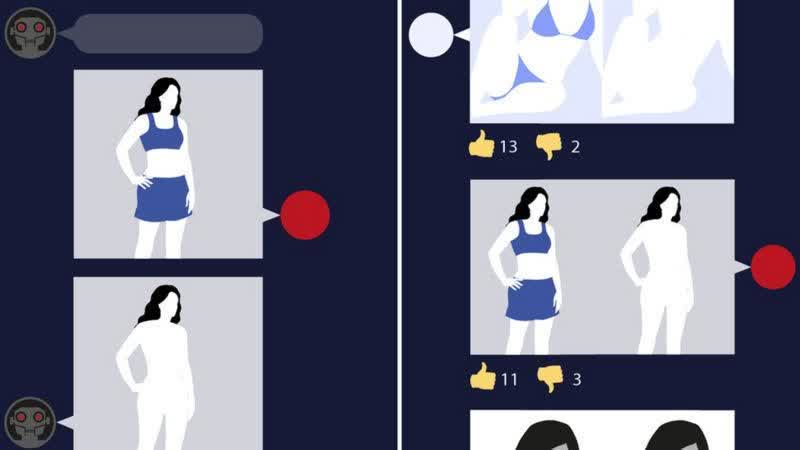

According to the researcher Genevieve Oh, who discovered it, the latest such site received more than 6.7 million visits in August. There are other single-photo face-swapping apps: ZAO and ReFace, for example, place the user in scenes selected from mainstream movies and pop videos. But as the first dedicated pornographic face-swapping app, Y takes this to a new level. It is “made-to-order” for creating pornographic images of people without their consent, says Adam Dodge, founder of EndTAB, a non-profit organization that educates people about technology-based abuse. This makes it easier for the creators to improve the technology for this particular use case, and it attracts people who would not otherwise have considered creating deepfake porn. “Whenever we specialize in that way, it creates a new corner of the Internet that attracts new users,” says Dodge.

When a user uploads a photo of a face, the site opens a library of porn videos. The majority feature women, but a few also feature men, mostly in gay porn. The user can then select any video, generate a preview of the face-swapped result within seconds, and pay a fee to download the full version. And the effects can last a lifetime for the victim.

To date, research firm Sensity AI estimates that 90% to 95% of all online deepfake videos are non-consensual pornography, and about 90% of them feature women. As the technology has advanced, many easy-to-use no-code tools have also emerged that allow users to “peel” the clothes off a woman’s body in an image. Many of these services have since been forced offline, but the code still exists in open-source repositories and continues to reappear in new forms.

From the beginning, deepfakes, that is, AI-generated synthetic media, have mainly been used to create pornographic depictions, often of women, who find this psychologically devastating. The original Reddit creator who popularized the technology swapped the faces of female celebrities into porn videos.